Articles Tagged with "machine vision"

Vision & Sensors | Sensor Trends

Lens and camera manufacturers need to collaborate to develop new mounting standards for the new, large sensor formats already on the market, as well as the ones that will be introduced.

Vision & Sensors | Machine Vision Trends

The Rise of Machine Vision

Machine vision is increasingly used in applications outside the factory.

January 1, 2022

Management

Robots Get the Job Done

Mobile robots are an increasingly important part of modern manufacturing.

October 1, 2021

Quality 101

I Think I Need AI! What is AI?

Most manufacturers evaluating AI are chiefly concerned with the cost and complexity of design and deployment.

October 1, 2021

Vision & Sensors | Machine Vision 101

Camera Link Standard Continues to Evolve with Machine Vision Industry

Although more than two decades old, Camera Link shows no sign of slowing down.

September 1, 2021

Vision & Sensors | Vision Robotics

How AI and Machine Vision Impact Vision Robotics

Just as humans need good data to make better decisions, so do AI systems.

September 1, 2021

Vision & Sensors | Optics

Advancements in Short Wave Infrared Sensors

Recent breakthroughs in sensor technology show great promise for moving SWIR into the machine vision mainstream.

September 1, 2021

Management

Just Starting the Automation Game? Three Steps to Make the Jump Easier and More Cost-Effective

If you're still considering the first, or possibly the next, step in your automation journey, the following steps can ease the process and make you more comfortable with it.

August 15, 2021

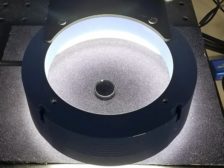

Vision & Sensors | Lighting

Simplify Deep Learning Systems with Optimized Machine Vision Lighting

Smart Lighting Improves Image Input Data for Effective Convolutional Neural Networks

July 8, 2021

Vision & Sensors | Trends

The Role of Quality in a Post-Pandemic World

It's especially clear that relying on on-site vision experts is no longer a viable strategy.

July 6, 2021

Copyright ©2024. All Rights Reserved BNP Media.

Design, CMS, Hosting & Web Development :: ePublishing